I am going to argue that the Zimmerman verdict (for the shooting of Trayvon Martin) was the correct one. You will either agree with me or you will not. And then I will argue that either way, it doesn’t matter in the slightest to most people’s lives.

Let me just say, before you dismiss this post entirely because of some preconceived notion about my politics, that I am very liberal on social issues. (As I’ve mentioned in the past, I literally don’t have an opinion on many complicated economic issues.) I’m strongly supportive of privacy rights, voting rights, women’s rights, LGBT rights, and animal rights. I think the idea of building a giant wall to keep out every illegal alien is absurd. I am for the legalization of marijuana and for the decriminalization of other drug use in general. I think man-made global warming is a self-evident fact. I think big monolithic corporations, in the long term, have a negative effect on the happiness of the masses because they operate as entities without conscience, self-awareness, or humanity.

But when the Zimmerman verdict came back on July 13 as not guilty, I wasn’t surprised. I wasn’t even outraged. I just sort of shrugged and moved on.

Granted: there is still racism in this country. I will even argue that there are often two disparate systems of justice in the U.S.: one for whites, and one for non-whites. But still…what does that have to do with the Zimmerman verdict?

Scenario 1. Let’s suppose George Zimmerman is a total card-carrying KKK racist. He may be, he may not be…I don’t have any evidence one way or another. And most of you don’t, either. But let’s just suppose he is. Let’s say he follows Trayvon Martin looking for trouble, hoping for a confrontation, hoping to scare the boy. A scuffle ensues and Martin is shot.

Is that murder?

I’m not a lawyer, but it doesn’t sound like murder. Manslaughter seems a better fit.

Scenario 2. Let’s be more realistic. Let’s assume Zimmerman is a racist, but not the frothing-at-the-mouth kind. He just feels uncomfortable having a black guy in his neighborhood. However, if you asked him, he’d claim to not be a racist, claim to have black friends, and try to seem like a reasonable guy.

He follows Martin, hoping to scare him off, but not actively hoping for a fight; he genuinely wants to keep the peace. If Martin gets scared, well that’s OK: he’s got no business being in this part of town. A scuffle ensues and Martin is shot.

Is that murder, or even manslaughter?

Again, I don’t think so. In this case, if Zimmerman is guilty of something, it’s…I don’t know…reckless endangerment? Putting himself and another in a situation where only bad things could happen?

I didn’t follow the trial all that closely, but I will say that some people who followed the trial even less than I did were outraged at the verdict. I can understand this, on some level; if a tragedy occurs (the shooting of Trayvon Martin was certainly a tragedy) then people want justice; they may even want revenge. If Zimmerman wasn’t to blame, then who was? Saying that “the system” or “society” or “endemic racial profiling” is the root cause of Martin’s death isn’t satisfying, because you can’t put those nouns behind bars and throw away the key and feel good about yourself. If no one gets blamed, then how does Trayvon Martin get justice?

Here are four ways Trayvon Martin could have gotten justice, or may still get justice:

- Florida’s inane stand-your-ground law gets repealed. That would be justice.

- Community watch volunteers stop carrying guns and instead call trained police professionals if they see suspicious behavior. That would be justice.

- Politicians stop listening to NRA lobbyists and start listening to common sense. That would be justice.

- Zimmerman admits what he did was horribly bad judgment; pleads guilty to reckless endangerment; then performs 300 hours of community service as a sort of penance. (In the long run this outcome would have been better for Zimmerman than the not guilty verdict, because I suspect Zimmerman may be a pariah for the rest of his life. A little bit of remorse would have gone a long way.)

Anyway, all things considered, I think the jury did what 99% of juries would have done in this case, which was let Zimmerman go free. The prosecution did not succeed in proving its case. In retrospect, I think that going for a murder charge was ill-advised and entirely political; the prosecutors should have aimed a little lower. Going for manslaughter from the start would have had a much better chance of success. Putting Zimmerman away for life on a murder rap isn’t justice; it’s revenge.

OK then. Feel free to agree, or rabidly disagree.

It doesn’t matter.

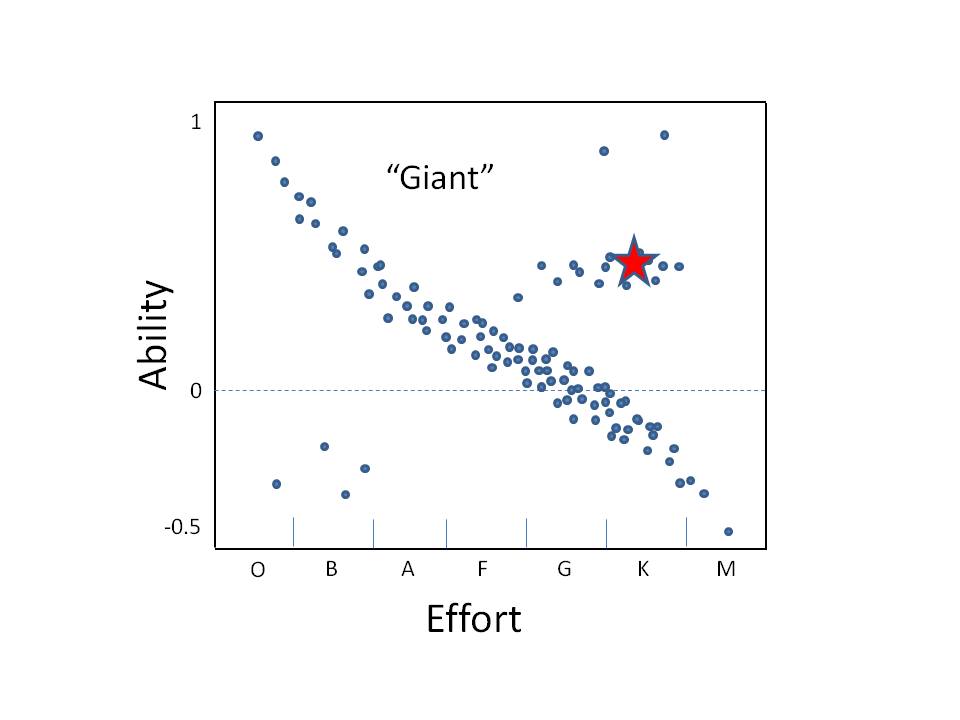

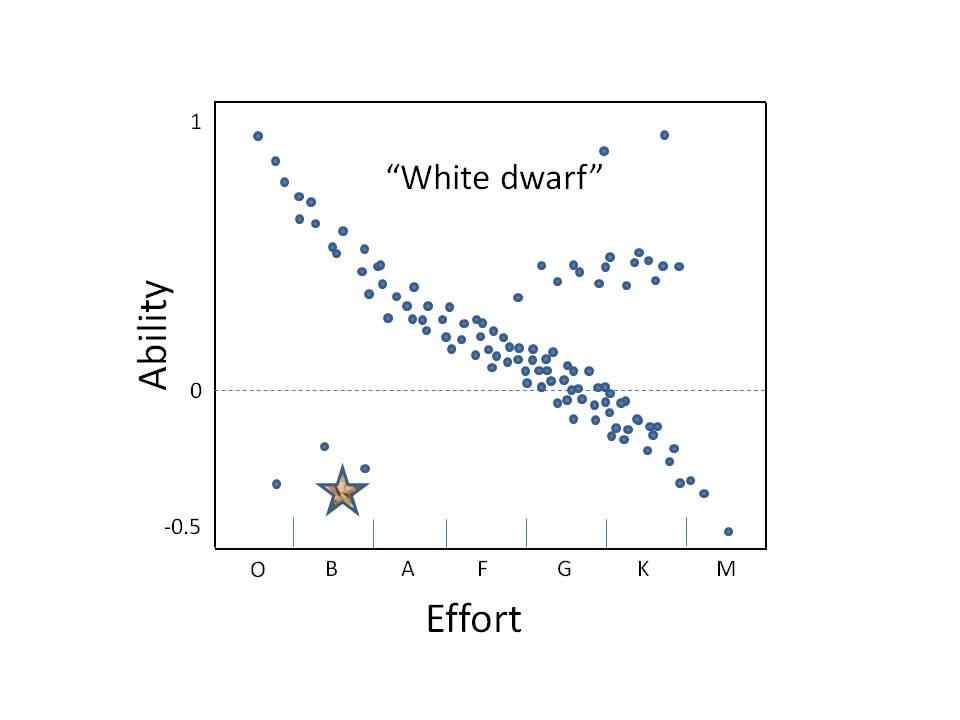

The Zimmerman case was just one case. One case, out of thousands of criminal cases in the U.S. every year. That is, the Zimmerman trial was just one data point.

I’ve talked about this before. You can’t really draw any conclusions about anything from one data point. And yet, people do it all the time. It’s a fallacy that probably has a name, but the name eludes me. But to most people, it’s not a fallacy. It has the weight of proof.

“I don’t believe in global warming.” [Katrina devastates New Orleans] “Wait, now I do!”

“I don’t think M. Night Shyamalan is a good director.” [Watches The Sixth Sense] “Wait, now I do!”

“I don’t think racial profiling is a real thing.” [Martin gets shot and his Skittles spill to the ground] “Wait, now I do!”

I hope all three of these arguments are equally absurd to you. If not, I think you lack the scientific mindset. Now, don’t get me wrong: I think global warming is real, and I think racial profiling is real. It’s just that you can’t make the case for those things with only one data point. (Indeed, the case of M. Night Shyamalan shows that one data point can lead you horribly astray: after the wonderful The Sixth Sense, Shyamalan has directed six turkeys in a row.)

I do think that racism still pervades the country. I do think that whites get a different kind of justice than non-whites in our judicial system. I do think that our country is obsessed…in an unhealthy way…with small metal devices whose sole purpose is to kill other human beings. But I don’t believe any of these things solely because of a single data point. You have to look at the big picture, look at the data in aggregate. A preponderance of evidence is required to separate fact from fiction, truth from rumor, knowledge from urban legend. As much as politicians love to bring up pithy examples and tell symbolic anecdotes, those examples and anecdotes are really rather meaningless. Give me the data or go home.

And that is why the Zimmerman verdict is really rather meaningless. Not to the family of Trayvon Martin, of course; I feel for them and am very sorry for their loss. But as to what the trial says, in a larger context, about our society in general? It says nothing. A single data point says nothing. It cannot say anything; that’s a simple mathematical fact. It takes at least two points to make a line.

If you want to know how prevalent racism is, or how two “separate-but-equal” judicial systems pervade the U.S., or even whether putting guns in the hands of rent-a-cops endangers citizens, look at the data. Data, plural. Give Nate Silver a call. Don’t argue by colorful anecdote. And if you don’t have the hard data, at least have the courage to admit to yourself that what you believe is based on nothing.

That’s what I believe. And yes, it’s really just based on nothing. But I’m OK with that, because somewhere, hunched over a desk, Nate Silver is crunching all the numbers, and he’s still not a witch.
